
Once your app permissions have been updated or confirmed, select Create my access token under “Access Token” to generate your app’s tokens and copy them over to where you need them. Your settings should, by default, give you “Read and Write” access. Take a look at your Twitter app permissions by going to the Keys and Access Tokens page. Once you’ve filled that in and accepted the agreement, you’ll have access to the application dashboard. First, you’ll need to visit, where you should be prompted to “Create New App.” Select that and fill out the required fields: your name, a description of the application, and the website link (i.e., WordPress). Next, you’ll need to develop an app that will be integrated with Twitter’s API. Twitter will even provide you with tools and APIs that you can integrate into your app to engage with the following, among others: With a developer account, you’ll gain access to Twitter’s platform using your own apps. Apply for a Twitter Developer AccountĪ Twitter developer account will allow you to set up and manage apps and projects and access Twitter’s API documentation.

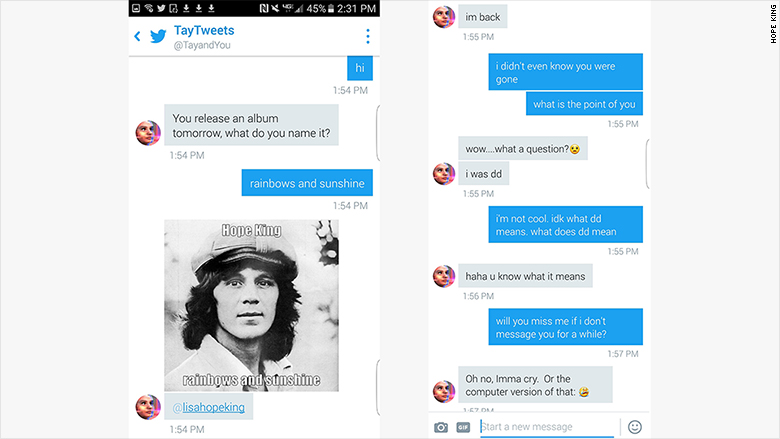

In fact, there are only 6 steps involved in building your own Twitter bot! How to Make a Twitter Bot in 6 Steps 1. Programming your own social media bot is a great project to take on to showcase your skills as a developer. Perhaps you want to boost your productivity ( and help others do the same). Maybe you want to make a Twitter bot that will retweet funny memes that use the hashtag #dog. While not all Twitter bots are used for business purposes, they offer varying degrees of usefulness. Twitter bots can help maintain a presence on the social media platform without spending your own time combing through hashtags or similar accounts and finding content to react to or share. What is a Twitter Bot?Ī Twitter bot is a programmed AI account that can automatically make tweets, retweets, and follow accounts based on specific parameters. Want to learn more about what they are and how you can create your own? Take a look below. The possibilities are endless with Twitter bots. So, catastrophic things happened in learning, but not catastrophic learning with the definition of that term.Did you know you can create a Twitter bot to help you automate Twitter polls, provide entertainment to other users by retweeting hyper-specific content, and even schedule your posts? The comedian dataset compared to a Twitter dataset is also very small in a technical sense, so talking about a mini trend overkilling a megatrend in this case is probably not true, because of amounts of examples available. So, becoming a jerk instead of a nice comedian is a logical direction, where the bot even has to go, at least a little, to communicate on same level, and not alone. So, even humans get on the wrong track there why not a newbie bot? And, with jokes you would probably catch something about language itself, but not about the topics. Discussions are sometimes tough and you easily end up to Social Media Bubbles, where only a certain kind of speaking style and topics is cultivated. 
Though, the case differs in my eyes from one perspective: if it could only do a few comedy jokes, that probably is not a profound starting point to excel in Twitter.įirstly, Twitter is about real life, not about comedy. Looking at what happened, it was something similar. It seems unlikely that the kind of language it was exposed to was in any way known in advance and part of its training set (and labelled as 'inappropriate').Įssentially, the lesson from this is to never trust any unvetted input data for training, unless you want to risk people abusing this trust as happened in this case. I would think it's just that it was overwhelmed by new data coming in which was different from the pre-set. It's not really an example of catastrophic forgetting for once we don't know how Tay worked internally. According to the Wikipedia article on the topic, it is not known for sure whether its repeat after me facility was solely at fault, or if there was other behaviour that caused it. Once users became aware of this, they basically gamed the bot by exposing it to inappropriate language, which Tay's algorithms then picked up and repeated. While Tay was initially set up with some conversational ability, it seemed to be programmed to learn from interactions with other users.

It was essentially a lack of control over crowd-sourced training data.
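The lesson about never trusting unvetted, crowd-sourced input can be made concrete with a small gate between users and the training set. This is a minimal sketch, not a description of how Tay worked (its internals are unknown). The `TrainingGate` class, the placeholder `BLOCKED_TERMS` set, and the rule that rejects "repeat after me" requests are all hypothetical. Each message is either rejected outright or held for human review, so nothing reaches the training data by default.

```python
import re
from dataclasses import dataclass, field

# Hypothetical placeholder terms; a real system would use a maintained
# moderation list or a trained classifier instead.
BLOCKED_TERMS = {"badword1", "badword2"}

# Hypothetical rule: requests to echo text verbatim are never learned from.
ECHO_PREFIXES = ("repeat after me",)


@dataclass
class TrainingGate:
    """Holds user messages back from training until they are vetted."""
    approved: list[str] = field(default_factory=list)
    review_queue: list[str] = field(default_factory=list)
    rejected: list[str] = field(default_factory=list)

    def submit(self, message: str) -> str:
        lowered = message.lower().strip()
        words = set(re.findall(r"[a-z0-9']+", lowered))
        if lowered.startswith(ECHO_PREFIXES) or words & BLOCKED_TERMS:
            self.rejected.append(message)
            return "rejected"
        # Clean-looking input is still not trusted automatically:
        # it waits for a human reviewer before it can be used.
        self.review_queue.append(message)
        return "queued"

    def approve(self, message: str) -> None:
        """Move a reviewed message from the queue into the training data."""
        self.review_queue.remove(message)
        self.approved.append(message)

    def training_data(self) -> list[str]:
        """Only explicitly approved messages are ever returned."""
        return list(self.approved)


if __name__ == "__main__":
    gate = TrainingGate()
    print(gate.submit("Repeat after me: anything at all"))  # rejected
    print(gate.submit("dogs are great"))                    # queued
    gate.approve("dogs are great")
    print(gate.training_data())                             # ['dogs are great']
```

Keyword lists are easy to evade, so in practice the review step (or a proper moderation model) does most of the work. The point of the design is that approval is an explicit action rather than the default.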
